Yesterday we looked at using PowerShell Events to track asynchronous backups, which let us fire off concurrent backup sessions from the same script. All of those backups ran independently of each other, so what would you do if you wanted to run batches of backups concurrently (i.e. all dbs on Server A and all dbs on Server B at the same time)?

That’s where PowerShell Jobs come in. Introduced in PowerShell 2.0, they let you fire off a block of script and have it run in the background. You can then wait for the jobs to finish, or poll for them yourself.
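As a minimal sketch of that lifecycle (the script block contents and the variable names `$first`, `$second` and `$results` are purely illustrative), the following starts two background jobs, waits for both, and then collects their output:

```powershell
# Start two background jobs; each one runs in its own background PowerShell process.
$first  = Start-Job -ScriptBlock { Start-Sleep -Seconds 2; "first job done" }
$second = Start-Job -ScriptBlock { Start-Sleep -Seconds 1; "second job done" }

# Block until both jobs have finished (Out-Null hides the job objects Wait-Job returns).
$first, $second | Wait-Job | Out-Null

# If you would rather poll, something along these lines could replace Wait-Job:
# while (Get-Job -State Running) { Start-Sleep -Milliseconds 500 }

# Collect whatever each job wrote to its output stream, then remove the jobs.
$results = $first, $second | Receive-Job
$first, $second | Remove-Job

$results
```

Both jobs sleep at the same time, so the whole thing should take roughly as long as the slower job rather than the sum of the two.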

We’ll use the example above of wanting to back up all the databases on two SQL Server installs concurrently:

$JobFunction={function backup_server_dbs

{

param([String]$ServerName)

Import-Module "SQLPS" -DisableNameChecking

$SQLSvr = New-Object -TypeName Microsoft.SQLServer.Management.Smo.Server($ServerName)

$Db = New-Object -TypeName Microsoft.SqlServer.Management.Smo.Database

foreach ($Db in $SQLSvr.Databases | Where-Object {$_.Name -ne "tempdb"}){

$Backup = New-Object -TypeName Microsoft.SqlServer.Management.Smo.Backup

$Backup.Action = [Microsoft.SQLServer.Management.SMO.BackupActionType]::Database

$BackupSetDescription = "Full Backup of "+$Db.Name

$Backup.Database = $Db.Name

$BackupName = "c:\psbackups\"+$Db.Name+"_"+[DateTime]::Now.ToString("yyyyMMdd_HHmmss")+".bak"

$DeviceType = [Microsoft.SqlServer.Management.Smo.DeviceType]::File

$BackupDevice = New-Object -TypeName Microsoft.SQLServer.Management.Smo.BackupDeviceItem($BackupName,$DeviceType)

try{

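# -ErrorAction Stop makes sure any failure is raised as a terminating error that Catch can handle

Backup-SqlDatabase -ServerInstance $ServerName -Database $Db.Name -BackupFile $BackupName -BackupSetDescription $BackupSetDescription -ErrorAction Stop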

}

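Catch [System.Exception]{

# $($Db.Name) expands the property inside the string; "$db.name" would only expand $db

$output += "`nError with $($Db.Name) at "+(Get-Date)

# Inside Catch, $_ is the error that was caught

$output += "`n"+$_.ToString()

# Use our own flag: $error is an automatic variable and overwriting it hides later error details

$failed = $true

}

Finally{

if (-not $failed){

$output += "`n Finished backup of $($Db.Name) at "+(Get-Date)

}

# Reset the flag ready for the next database

$failed = $false

}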

}

return $output

}

}

$servers = @("Server1","Server2")

$jobs = @()

foreach ($servername in $servers){

$jobs += Start-job -ScriptBlock {backup_server_dbs $args[0]} -InitializationScript $JobFunction -ArgumentList($servername)

}

$jobs | Wait-Job

# One consistently spelled variable, so the final Write-Output actually has something to show

$FullOutput = ""

foreach ($job in $jobs){

Receive-Job $job | Tee-Object -Variable joutput

$FullOutput += $joutput

Remove-Job $job

}

Write-Output $FullOutput

This script starts off a little differently to every backup script we’ve looked at so far in this series. What we do first is to create a variable $JobFunction which holds a function definition for a function backup_server_dbs. The reason for this will be explained slightly later on in the script.

backup_server_dbs accepts one parameter, a string called $ServerName. After that it should look pretty familiar from our previous articles. We build a SQL Server connection and loop through all of its databases (ignoring poor TempDB), backing each one up. The main difference is the inclusion of a Try, Catch, Finally block around the actual backup. If you’ve not come across this before, it’s a simple way of handling errors in PowerShell. At a high level we are:

- Try: Try doing something

- Catch: If “trying” has caused an error, Catch it

- Finally: Whatever happened when trying, Finally we’ll do this

So here we Try to perform the backup. If there’s an error we Catch it and add the error message to our output string, and Finally, if we’ve had no errors, we append the completion time to our output string.
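To see the pattern in isolation, here is a small sketch that has nothing to do with backups. The function name `Test-Division` is made up for this illustration. Note that Catch only sees terminating errors, so a cmdlet that reports a non-terminating error needs `-ErrorAction Stop` before Catch will fire.

```powershell
# Hypothetical helper used only to demonstrate Try / Catch / Finally.
function Test-Division {
    param([int]$Divisor)

    $output = ""
    try {
        # Dividing by zero raises a terminating error, so control jumps to catch.
        $result = 10 / $Divisor
        $output += "Result is $result"
    }
    catch [System.Exception] {
        # Inside catch, $_ holds the error that was raised.
        $output += "Error: " + $_.Exception.Message
    }
    finally {
        # Runs whether or not the try block succeeded.
        $output += " (finished at " + (Get-Date -Format "HH:mm:ss") + ")"
    }
    return $output
}

Test-Division -Divisor 2   # takes the success path
Test-Division -Divisor 0   # takes the catch path
```

In both calls the Finally block still appends the timestamp, which is the same role it plays in the backup function.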

Once we’ve attempted to back up all of our databases we exit the function, returning our $output variable.

Moving on from the function we set up 2 array variables, the first $Servers holding our server names, and the 2nd one is unpopulated but is going to hold the jobs we create to run the backups.

Using foreach we loop through each server we’ve provided, and start a new job for each one with the Start-Job cmdlet.

And this is the reason we created a variable holding a function: we can pass it into Start-Job as an InitializationScript and then call the function it defines from the ScriptBlock parameter. A slightly cleaner way of doing this is to keep the function in a secondary PowerShell script file and dot-source that file from the InitializationScript, but that’s a little hard to show in a simple blog posting, and this way also keeps everything together in one file.
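Stripped of the backup logic, the mechanism looks something like the sketch below. `Get-Greeting` and `$init` are illustrative names, and the file path in the commented-out variant is a placeholder rather than a real file.

```powershell
# The initialization script runs inside the job before the main script block,
# so any function it defines is available to that script block.
$init = {
    function Get-Greeting {
        param([string]$Name)
        "Hello from the job, $Name"
    }
}

# $args[0] inside the script block receives the value passed via -ArgumentList.
$job = Start-Job -InitializationScript $init -ScriptBlock { Get-Greeting $args[0] } -ArgumentList "Server1"
$greeting = $job | Wait-Job | Receive-Job
Remove-Job $job

$greeting

# Variant with the function kept in a separate file (placeholder path):
# Start-Job -InitializationScript { . "C:\scripts\BackupFunctions.ps1" } `
#           -ScriptBlock { backup_server_dbs $args[0] } -ArgumentList "Server1"
```

Without the InitializationScript the job would fail to find the function, because a job does not inherit functions defined in the calling session.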

Once we’ve submitted the jobs we pass the array containing them ($jobs) into Wait-Job. This pauses execution until all the jobs in $jobs have completed.

Once all the jobs have completed we foreach through each $job in the $jobs array. We use the Receive-Job cmdlet to get the returned information from the job and pass it through Tee-Object, which both echoes it to the console and grabs it into a temporary holder ($joutput). We then append that to a full output variable and pass the job to Remove-Job to clear it from the jobs list.
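One possible variation on this collection loop is to record each job’s final State next to its output, so a job that did not complete stands out in the summary. This is a sketch with stand-in script blocks rather than real backups, and `$fullOutput` and `$jobOutput` are just names chosen for the example:

```powershell
# Stand-in jobs; in the real script these would call backup_server_dbs.
$jobs = @()
foreach ($name in "Server1", "Server2") {
    $jobs += Start-Job -ScriptBlock { "finished batch for " + $args[0] } -ArgumentList $name
}

# Wait for every job, discarding the job objects that Wait-Job emits.
$jobs | Wait-Job | Out-Null

$fullOutput = ""
foreach ($job in $jobs) {
    # Receive-Job returns whatever the job wrote to its output stream.
    $jobOutput = Receive-Job $job
    # Prefix the output with the job's final state (Completed, Failed, ...).
    $fullOutput += "`n[$($job.State)] $jobOutput"
    Remove-Job $job
}

Write-Output $fullOutput
```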

And finally we output the Success and Error Messages back to the console.

This basic approach can be used any time you want batches of work running at the same time. By extending the function to take a filegroup name and a backup location, you could back up each filegroup to a different set of disks, thereby maximising your throughput by not just hitting a single controller, NIC or HBA:

Import-Module SQLPS -DisableNameChecking

$JobFunction={function backup_server_dbs

{param([String]$ServerName, [String]$DatabaseName, [String]$FileGroup, [String]$BackupLocation)

Import-Module "SQLPS" -DisableNameChecking

$BackupSetDescription = "Filegroup backup of Filegroup "+$FileGroup+" of "+$DatabaseName

$BackupName = $BackupLocation+"\"+$DatabaseName+"_"+$FileGroup+"_"+[DateTime]::Now.ToString("yyyyMMdd_HHmmss")+".bak"

try{

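# Use the $DatabaseName parameter rather than a hard-coded name; -ErrorAction Stop lets Catch see failures

Backup-SqlDatabase -ServerInstance $ServerName -Database $DatabaseName -BackupFile $BackupName -BackupSetDescription $BackupSetDescription -DatabaseFileGroup $FileGroup -BackupAction Files -ErrorAction Stop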

}

Catch [System.Exception]{

$output += "`nerror with $DatabaseName at "+(Get-Date)

$output += "`n vars = $ServerName, $DatabaseName, $BackupName, $BackupLocation"

# Inside Catch, $_ is the error that was caught

$output += "`n"+$_.ToString()

# Use our own flag rather than overwriting the automatic $error variable

$failed = $true

}

Finally{

if (-not $failed){

$output += "`n finished backup of $DatabaseName "+(Get-Date)

}

$failed = $false

}

return $output

}

}

$servername = "Server1"

$DatabaseName = "fg_test"

$BackupLocations = @("c:\psbackups\fg1","\\Server2\shareA","\\Server3\ShareB")

$jobs = @()

$i=0

$SQLSvr = New-Object -TypeName Microsoft.SQLServer.Management.Smo.Server($ServerName)

$Db = New-Object -TypeName Microsoft.SqlServer.Management.Smo.Database

$db = $SQLSvr.Databases.Item($DatabaseName)

foreach ($FileGroup in $db.FileGroups){

$jobs += Start-job -ScriptBlock {backup_server_dbs $args[0] $args[1] $args[2] $args[3]} -InitializationScript $JobFunction -ArgumentList ($servername,$DatabaseName, $Filegroup.Name, $BackupLocations[$i])

$i++

}

$jobs | wait-job

$FullOutput = ""

foreach ($job in $jobs){

Receive-Job $job | Tee-Object -Variable joutput

$FullOutput += $joutput

Remove-Job $job

}

Write-Output $FullOutput

If you were writing the above script for production use, you’d probably want to include a check to ensure you’ve provided as many backup locations as you have filegroups.
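Such a check might look like the sketch below. `Test-BackupLocationCount` is a hypothetical helper, and the filegroup names are made up for the example; in the script above you would pass the names taken from `$db.FileGroups` instead.

```powershell
# Hypothetical guard: fail early if there are fewer backup locations than filegroups.
function Test-BackupLocationCount {
    param(
        [string[]]$FileGroupNames,
        [string[]]$BackupLocations
    )

    if ($BackupLocations.Count -lt $FileGroupNames.Count) {
        throw "Need $($FileGroupNames.Count) backup locations but only $($BackupLocations.Count) were provided."
    }
    return $true
}

# Illustrative values only.
$fileGroups = @("PRIMARY", "FG1", "FG2")
$locations  = @("c:\psbackups\fg1", "\\Server2\shareA", "\\Server3\ShareB")

# Returns $true here because there are three locations for three filegroups.
Test-BackupLocationCount -FileGroupNames $fileGroups -BackupLocations $locations
```

Calling it before the foreach loop that starts the jobs means the script stops before any backup has begun, rather than failing partway through with an empty backup location.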

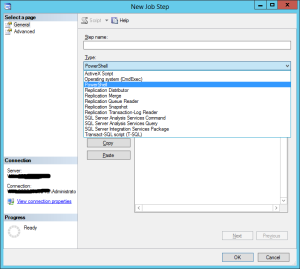

Tomorrow we’ll be looking at scheduling PowerShell Tasks.

This post is part of a series posted between 1st September 2013 and 3rd October 2013, an index for the series is available here.