Nice quiet day in the office, busily cracking through the To Do list when suddenly something pops into the ticketing system as a P1. Data has gone ‘missing’ from a corporate system, and there’s nothing in the audit trail about when it went missing or how it went missing. For how, read ‘Whodunnit’!

Now if there’s nothing in the audit trails, and noone is confessing then that doesn’t leave me with very much to go on. A slight break through is that someone clearly recalls seeing the data at 09:45 on the 3rd of Febuary, but that’s as good as it gets. We’re now on the 6th Febuary, so that’s a window of just over 3 days it could have gone missing.

Now, as any DBA knows, SQL Server comes equipped with a bit in ‘audit’ for any operation that modifies data. It’s just awkward and complex to read and search.

The name of that audit is the Transaction Log. SQL Server won’t do any data modification without it being written into the log, as SQL Server doesn’t count the transaction committed until it’s logged as it needs it for recovery after a restart.

The transaction log holds a LOT of information. Searching 3 days worth of it would be like looking for something in a hay stack. And at the moment we don’t even know if that’s a needle, a pin or an apple seed

An aside about reading Transaction Logs

SQL Server comes with 2 built in, but undocumented, functions fn_dblog and fn_dump_dblog. Undocumented means Microsoft don’t publish documentation and reserve the right to modift them without notice. But they’re a pretty open secret, and there’s pleny of info out there that

For the purposes of this post, you just to need to know this about them:

- fn_dblog reads the current transaction log

- fn_dump_dblog reads transaction log backups

If you’re on a SQL version lower that SQL Server 2014 or SQL Server 2012 SP2 then there is a know bug that uses up threads and can cause hanging.

Finding the time of deletion

So the first thing to do is to is to work out a time window when the data went missing. This was going to be a tedious process, so lets automate it. If we’re automating a SQL Server task, then dbatools becomes the obvious answer.

The plan is:

- Restore the database the last time the data was seen

- Roll forward in 6 hour increments until the data disappears

- Roll forward in 30 minute steps from the beginning of the last 6 hour lost until the data disappears

- Roll forward from the 30 minute start to the disappearance of the data in 5 minute increments

So we now have a 5 minute window we need to search in the transaction log backup, which is much better than 72 hours

To speed things up we’ll scan all the backup headers first and save them into an object which we can reuse through out the process. We’ll also rename the database, and change the filenames on restore. This is because we are restoring the database onto the instance it came from, the reason we have to do this will become clearer later on

We also gather some extra information about the missing rows, which I’ll explain in a moment.

# Time to start Restoring from

$startTime = get-date ('03/02/2020 09:45')

# The time in minutes between restore points

$windowSize = @('720','30','5')

# Used to track how far through the windows we are

$counterLoop = 0

# Query to test if the object has been deleted during the window

# We also gather some internal SQL Server page information we'll need later

$sqlQuery = 'select db_id(), sys.fn_PhysLocFormatter(%%physloc%%),* from dbo.Objects where ObjectID=''1'''

# Scan all the backup headers first to speed things up

$backups = Get-DbaBackupInformation -Path c:\RestoredBackps -SqlInstance MyInstance

# Set a variable to tell restore whether to start a new restore, or to continue

$restoreContiue = $false

while ($counterLoop -lt $windowSize.count){

$backups | Restore-DbaBackup -SqlInstance MyInstance -DatabaseName RestoreDb -ReplaceDbNameInFile -TrustDbBackupHistory -RestoreTime $startTime.AddMinutes($windowSize[$loopcount]) -Standby c:\Standby -Continue:$restoreContiue

#Prevent the old page information from being overwritten

$oldResult = $sqlResult

$sqlResult = Invoke-DbaQuery -SqlInstance MyInstance -DatabaseName RestoreDb -Query $sqlQuery

if ($sqlResult.count -eq 0){

# If we get no results, we're past when the data has disappeared

# So, move to the next loop and start a new restore

$counterLoop++

$restoreContiue = $false

} else {

# If data still there, start the next increment

$startTime = $startTime.AddMinutes($windowSize[$counterLoop])

$restoreContiue = $true

}

}

Write-Host "Data disappeared between $startTime and $($startTime.AddMinutes($windowSize[$counterLoop]-1))`n"

Write-Host "Missing data was on the following pages:`n"

$oldResult

Now we know when the data disappeared, now we just want how

Searching the Transaction Logs

SQL Server Transaction logs store a LOT of information, so querying them will return more information that you really want. Also the information in the Transaction Log is for the benefit of SQL Server, so not all of it is easily readable for us humans

For instance, when we’re looking for a specific missing row the easiest way is to search for transaction log records that modified the physical location the row existed on. While the actual row data and SQL details are in the log, they’re encoded, so getting to them is a lot of work.

We’re looking for a specific row in a specific table so we need the following 3 bits of information to identify it’s physical location:

- Datafile ID

- Page ID

- Slot ID

These can be found for a specific row with the following query using the fn_PhysLocFormatter function which handily returns the physical location of a row in a table:

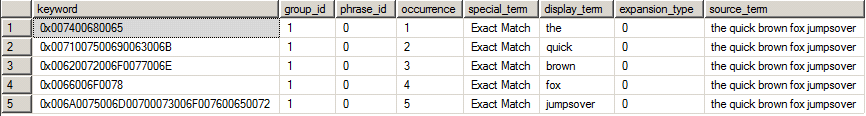

select db_id(),sys.fn_PhysLocFormatter(%%physloc%%) from dbo.Objects where ObjectID='1'

This will give you result like:

| db_id | File:Page:Slot |

| 5 | (1:232:58) |

The reason we were saving this from the previous iteration of the loop is because it wouldn’t exist once the data had been deleted.

Now all we need to do is to search the transaction log. However, there’s one more small step before we can do that. In the transaction log the FileID and PageID are stored as fixed length hexadecimal values, and we’ve just pulled them out in decimal format.

I convert them with PowerShell as it’s a little easier for me to remember:

PS C:\ '{0:x4}' -f 1

0001

PS C:\ '{0:x8}' -f 336

00000150

Note how the FileID and PageID are padded out to 4 characters and 8 characters respectively. This query will return all the transactions that affected that slot on that page in that file. Depending on how busy that page is that might be a lot.

select * from fn_dblog(NULL,NULL) where [Page ID]='0001:00000150' and [Slot ID]='58'

Fiding out who and when

You’ll instantly find out just how much information there is in a transaction log record. So let’s trim down the data a bit much to a couple of things we want to know:

- Who issued the command

- Exactly when it was run

And also limit it to just delete records:

select [Transaction ID],[Begin Time],[Transaction SID] from fn_dblog(NULL,NULL) where [Page ID]='0001:00000150' and [Slot ID]='58' and Operation='LOP_DELETE_ROWS

If you’re lucky and this returnselect [Transaction ID],[Begin Time],[Transaction SID] from fn_dblog(NULL,NULL) where [Page ID]=’0001:00000150′ and [Slot ID]=’58’ and Operation=’LOP_DELETE_ROWSs a single row, you’ll find that only [Transaction ID] is populated as the other goodies are recorded at the Transaction wrapper level, not the statement level, so we take the Transaction ID and use that:

select [Transaction ID],[Begin Time],suser_sname([Transaction SID]) from fn_dblog(NULL,NULL) where [Transaction ID]=’0000:000003ba’

select [Transaction ID],[Begin Time],suser_sname([Transaction SID]) from fn_dblog(NULL,NULL) where [Transaction ID]='0000:000003ba'

This returns all the rows for the specified transaction in the transaction log. You’ll have 2 rows for the Transaction wrapper, the BEGIN and the COMMIT, these will be the first and last row.

The rows in between will be the actual deletion records. You might have one or more depending how much data was in the row, or if it was part of a delete than remove multiple rows.

The BEGIN transaction record contains the information you wanted:

- [Begin Time] gives you the time the delete started

- [Transaction SID] gives you the Security Identifier of the account that executed the transaction.

We user SUSER_SNAME to convert the SID to a username. This is the reason I mentioned that you want to do this on the same instance as the original database was on, this is to make sure the SIDs match up and you get the right name!

Conclusion

As you can see, everything you’d ever want to know about what happened in SQL server is in the transaction log. But it’s not an easy beast to work with, much better to look at better methods to save you having to do this.

It’s time for another T-SQL Tuesday. This month Wayne (

It’s time for another T-SQL Tuesday. This month Wayne (